This is a modified version of a lab written by Alex Clarke at the University of Regina Department of Computer Science for their course, CS315. Any difficulties with the lab are no doubt due to my modifications, not to Alex's original!

This lab is an introduction to Fundamental OpenGL Functions

After the lab lecture, you have one week to:

Before you begin this lab, create a new template project as you did in the first lab, stopping before the point where you added the triangle code. You will complete the project by following the instructions in this lab's notes instead.

OpenGL is an operating system and hardware platform independent graphics library designed to be easily portable yet rapidly executable. Unlike Direct3D, which is only available on PC, Xbox 360 and Windows Mobile OS, OpenGL is available on a wide variety of hardware platforms and operating systems including Unix/X11 (Linux, Irix, BSD, Solaris), Mac OS X, and Microsoft Windows 98 to Windows 8. The embedded version, OpenGL ES, is available on many handheld devices including iPhone OS and Android OS devices. A JavaScript version of OpenGL ES 2.0 called WebGL 1.0 runs in web browsers through the HTML5 canvas element. Vulkan, a successor API to OpenGL from the same standards group (Khronos), was officially released in February 2016. The first games appeared soon after, with a few major releases adding official support over summer 2016.

```javascript
var gl; // Generic variable, intended to hold the WebGL rendering context (RC) object
```

The Rendering Context object is acquired with the getContext function built into HTML5 canvases. This function may also provide access to other types of renderers. To make sure the function is called correctly on older web browsers, and to handle cases where it fails, Google's cross-browser webgl-utils.js provides setupWebGL(), which wraps getContext().

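```javascript
// Without webgl-utils:

// Find the canvas by its id
var canvas = document.getElementById( /* your canvas element's id goes here */ );

// Get the WebGL RC and do some minimal error checking.
// getContext() returns null when the context cannot be created;
// the try/catch is a precaution in case an exception is thrown instead.
try {
    gl = canvas.getContext("webgl" /*, optional attributes */ );
    // could also try alternatives like "experimental-webgl" or "moz-webgl"
} catch (e) {
    gl = null;
}

if (!gl) {
    // innerHTML is a property, so assign to it rather than calling it
    canvas.parentNode.innerHTML = "Cannot get WebGL Rendering Context";
}
```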

```javascript
// With webgl-utils:
var canvas = document.getElementById( /* your canvas element's id goes here */ );
gl = WebGLUtils.setupWebGL(canvas /*, optional attributes */ );
```
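If you would rather not depend on webgl-utils.js, the fallback logic above can be wrapped in a small function. The sketch below is an assumption, not part of webgl-utils.js or the textbook code: getRenderingContext is a hypothetical name, and it only tries the standard "webgl" name followed by the older "experimental-webgl" name.

```javascript
// Hypothetical helper (not part of webgl-utils.js): try the standard context
// name first, then the prefixed name used by some older browsers.
// Returns the rendering context, or null if none could be created.
function getRenderingContext(canvas, attributes) {
    var names = ["webgl", "experimental-webgl"];
    for (var i = 0; i < names.length; i++) {
        var context = null;
        try {
            context = canvas.getContext(names[i], attributes);
        } catch (e) {
            context = null; // treat an exception like a failed request
        }
        if (context) {
            return context;
        }
    }
    return null; // the caller decides how to report the failure
}

// Usage in a page ("gl-canvas" is a made-up id):
// var gl = getRenderingContext(document.getElementById("gl-canvas"));
// if (!gl) { /* show an error message */ }
```

Returning null rather than writing into the page keeps the error reporting in one place, in whatever code calls the helper.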

Once you have a rendering context, all your interactions with WebGL will be through that object. This means that if your rendering context object is called gl, all WebGL calls will begin with `gl.`. WebGL constants are also defined as members of the rendering context object. For example, in this lab you will see:

| Category | Functions | Constants |
|---|---|---|
| Data Management | gl.createBuffer(), gl.bindBuffer(), gl.bufferData() | gl.STATIC_DRAW, gl.DYNAMIC_DRAW, gl.STREAM_DRAW, gl.ARRAY_BUFFER |
| Shader Management | gl.getAttribLocation(), gl.enableVertexAttribArray(), gl.vertexAttribPointer(), gl.getUniformLocation(), gl.uniform*() | |
| Built-in Settings | gl.clearColor(), gl.clearDepth(), gl.cullFace(), gl.frontFace(), gl.enable() | gl.FRONT, gl.BACK, gl.FRONT_AND_BACK, gl.CW, gl.CCW, gl.CULL_FACE, gl.DEPTH_TEST |
| Draw | gl.clear(), gl.drawArrays() | gl.LINES, gl.LINE_LOOP, gl.LINE_STRIP, gl.TRIANGLES, gl.TRIANGLE_STRIP, gl.TRIANGLE_FAN, gl.POINTS, gl.COLOR_BUFFER_BIT, gl.DEPTH_BUFFER_BIT |
| Other | | gl.FLOAT, gl.UNSIGNED_BYTE |

Some names from desktop OpenGL, such as POINT_SMOOTH, TRUE and FALSE, are not defined on a WebGL context. Where a GLboolean argument is expected, pass the JavaScript values true and false.

For a full list, see the section on the WebGL Context in the WebGL Specification.

Before you can do any drawing you need to tell WebGL what to do with the things you tell it to draw. You do this with shader programs. WebGL uses OpenGL ES Shading Language 1.0 as its shader programming language. It is a modified version of GLSL 1.2. Shader programs consist of a minimum of two parts: a vertex shader and a fragment shader.

You may also have heard of three other shader types: geometry shaders, tessellation shaders, and compute shaders. Geometry shaders were introduced in OpenGL 3.2 Core Profile, tessellation shaders in OpenGL 4.0, and compute shaders in OpenGL 4.3. They are optional stages in an OpenGL shader program and are not available at all in WebGL, so they will not be covered in these labs.

You will send lists of vertex information into a vertex shader. This information represents attributes of the vertex that can change from one vertex to the next, such as colour and position. Vertex shader inputs are marked with the attribute qualifier.

When we are done manipulating and creating vertex properties, we pass the results along to the fragment shader through outputs marked with the varying qualifier. (In WebGL 2's GLSL ES 3.00, these qualifiers are replaced by in and out.) The varying outputs of the vertices in a primitive are interpolated across that primitive: if their values differ, the result varies from fragment to fragment.
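To make the interpolation concrete, here is a simplified CPU model of what the rasterizer does with a varying such as a colour. It assumes the fragment's barycentric weights are already known and ignores the perspective correction real hardware applies; interpolateVarying is a hypothetical helper, not a WebGL function.

```javascript
// Simplified model of varying interpolation across one triangle.
// values:  one vec4 per vertex, as plain 4-element arrays
// weights: the fragment's barycentric weights (non-negative, summing to 1)
function interpolateVarying(values, weights) {
    var result = [0, 0, 0, 0];
    for (var v = 0; v < 3; v++) {
        for (var c = 0; c < 4; c++) {
            result[c] += weights[v] * values[v][c];
        }
    }
    return result;
}

var red = [1, 0, 0, 1], green = [0, 1, 0, 1], blue = [0, 0, 1, 1];

// At a vertex, the fragment gets exactly that vertex's colour...
var atRedVertex = interpolateVarying([red, green, blue], [1, 0, 0]);
// ...and at the centre it gets an even mix (about 0.333 per colour channel).
var atCentre = interpolateVarying([red, green, blue], [1 / 3, 1 / 3, 1 / 3]);
```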

Below is our first vertex shader. Replace the vertex-shader in your template with this vertex shader code:

```glsl
attribute vec2 vPosition; // receives incoming vertex positions
varying vec4 color;       // passes the colour of this vertex to the fragment shader via the rasterizer

void main()
{
    // Add default z and w coordinates to the incoming position and pass it on.
    gl_Position = vec4(vPosition, 0.0, 1.0);

    // Colour every vertex red
    color = vec4(1.0, 0.0, 0.0, 1.0); // colour channels are red, green, blue and alpha
}
```

This vertex shader only has one input which represents a 2D coordinate for the vertex. This coordinate is in a 2 component vector which has a base type of float. Vertices can be moved around in space, coloured, and lit by the vertex shader. You will explore many of these things later. For now, our vertex program will only provide a colour for the vertex. This colour is hard coded and will be the same for all vertices. You will learn how to change this colour programmatically later on.

Our first vertex shader also has two outputs: the colour varying, declared as a 4 component vector (vec4), and the built-in output gl_Position. gl_Position is also a vec4, which is why the shader adds two more components to vPosition when it assigns it to gl_Position.

All vertex shaders have a second built-in output, gl_PointSize, which controls the size of points. Its value is the point's width in pixels, and it only has an effect when you draw with the POINTS mode.

The fragment shader gets data that is blended from each vertex that makes up the primitive being drawn. This could be a position somewhere between each vertex, a texture lookup coordinate, or a blended colour. For now our shader will ignore the built-in inputs and simply copy the incoming colour to the screen.

Replace the fragment-shader in your template with this fragment shader code:

```glsl
precision mediump float;

varying vec4 color; // The blended fragment colour from the vertex shader.
                    // A varying in a fragment shader must match a varying
                    // with the same name and type in the vertex shader.

void main()
{
    gl_FragColor = color;
}
```

This fragment shader has one input for the interpolated colour. The name and type of each varying you declare in a fragment shader must match a varying declared in the vertex shader.

There is a built-in 4 component output vector, or vec4, called gl_FragColor that you should set in most fragment shader programs to provide the colour output for the fragment shader. If you don't, the output colour will be undefined.

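In addition to the gl_FragColor output, fragment shaders have three built-in inputs: gl_FragCoord (the fragment's window coordinates), gl_FrontFacing (true if the fragment belongs to a front-facing primitive) and gl_PointCoord (the fragment's position within a point being drawn, from 0 to 1).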

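Once both shaders are in your template, load, compile and activate them in your init function:

```javascript
// Load and compile shaders, then use the resulting shader program
var program = initShaders(gl, "vertex-shader", "fragment-shader" );
gl.useProgram( program );
```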

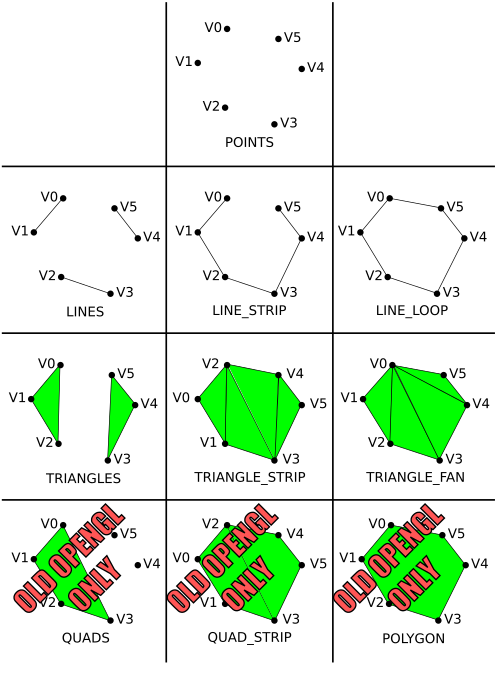

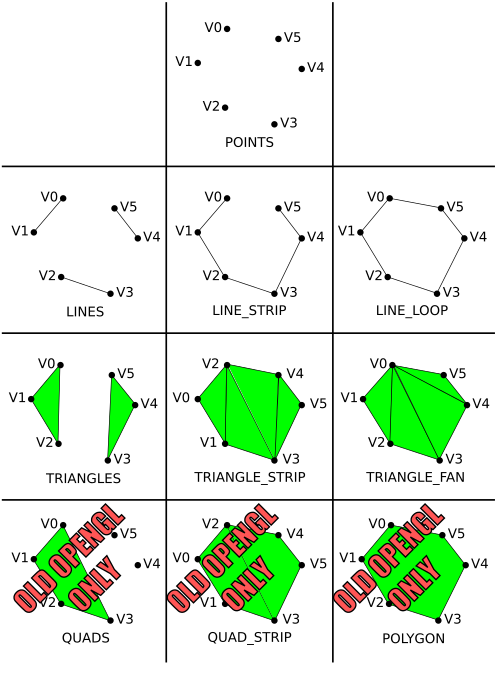

Your graphics hardware has limited ability to represent geometry. Most hardware only knows how to render triangle primitives. Everything else is built up using triangles. Older versions of OpenGL included some other shapes that might have been supported by some specialized hardware, such as convex polygons and quadrilaterals, but that support has been removed from most newer versions. Below is a diagram showing the different primitive types or modes:

Drawing with one of these types is controlled by the drawArrays or drawElements function. The drawArrays function tells your rendering context to begin drawing a certain number of elements from a list of vertex data that has already been loaded into an array buffer object and connected to appropriate shader inputs. The drawElements function is similar, but requires an additional element index buffer that allows you to access the data in the vertex arrays out of order. This is the last you'll hear of drawElements in the labs. Regardless of which you use, to be able to draw you will need to know how to load vertex data into a buffer, and how to attach it to a shader attribute.
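The mode passed to drawArrays decides how many primitives a given vertex count produces. The following sketch tabulates that relationship; the string mode names stand in for the gl.* constants so it runs without a WebGL context, and primitiveCount is a hypothetical helper.

```javascript
// How many primitives drawArrays(mode, first, count) produces from `count` vertices.
// Leftover vertices that cannot complete a primitive are ignored.
function primitiveCount(mode, count) {
    switch (mode) {
        case "POINTS":         return count;
        case "LINES":          return Math.floor(count / 2);
        case "LINE_STRIP":     return Math.max(count - 1, 0);
        case "LINE_LOOP":      return count >= 2 ? count : 0;   // the last vertex joins back to the first
        case "TRIANGLES":      return Math.floor(count / 3);
        case "TRIANGLE_STRIP":                                   // each new vertex adds one triangle
        case "TRIANGLE_FAN":   return Math.max(count - 2, 0);
        default: throw new Error("Unknown mode: " + mode);
    }
}

// With the six vertices used later in this lab:
// TRIANGLES gives 2 triangles, TRIANGLE_STRIP and TRIANGLE_FAN give 4 each.
```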

Basic WebGL rendering primitives are made up of lists of vertices.

Vertex data can be two, three or four dimensional. An extra dimension is sometimes necessary to properly move vertices around in space. Vertex data is most often represented with the vec2, vec3, and vec4 data types in the shader. These are 2, 3 and 4 component floating point vectors.

This data should be uploaded from JavaScript arrays of 32-bit floating point type. For example, the following flat array gives the 2D coordinates for the three vertices in a triangle:

```javascript
// Triangle positions
var points = new Float32Array([ 0.9, 0.9,   0.9, 0.0,   0.0, 0.9 ]);
```
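Because every component is a 32-bit float, the size of the data in bytes is easy to work out. This short check uses only standard JavaScript typed-array properties:

```javascript
// The triangle above: 3 vertices x 2 components x 4 bytes per float
var points = new Float32Array([ 0.9, 0.9,   0.9, 0.0,   0.0, 0.9 ]);

var componentsPerVertex = 2;                                      // matches vec2 vPosition
var vertexCount = points.length / componentsPerVertex;           // 3 vertices
var sizeInBytes = points.length * Float32Array.BYTES_PER_ELEMENT; // 24 bytes, same as points.byteLength
```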

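The number of coordinates provided per vertex should match the vec type of the position input in the shader you are using. If the data has fewer components than the shader expects, the missing ones are padded with default values; if it has more, the extra components are ignored.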

The textbook's MVnew.js file defines JavaScript classes for the vec2, vec3 and vec4 data types. The following code is identical to the array above, but uses the vec2 class:

```javascript
// Triangle positions
var points = [ vec2( 0.9, 0.9), vec2( 0.9, 0.0), vec2( 0.0, 0.9) ];
```
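Either way, the buffer ends up holding the same flat sequence of 32-bit floats. The textbook's flatten() performs that conversion for vec arrays; the function below is a simplified, hypothetical stand-in (plain nested arrays instead of the vec2 class) that shows the idea, not the textbook's actual code.

```javascript
// Simplified stand-in for the textbook's flatten(): copies an array of
// equal-length vectors (plain JS arrays here) into one Float32Array.
function flattenVectors(vectors) {
    if (vectors.length === 0) {
        return new Float32Array(0);
    }
    var size = vectors[0].length;
    var result = new Float32Array(vectors.length * size);
    for (var i = 0; i < vectors.length; i++) {
        if (vectors[i].length !== size) {
            throw new Error("All vectors must have the same number of components");
        }
        result.set(vectors[i], i * size); // copy vector i into its slot
    }
    return result;
}

var points = [ [0.9, 0.9], [0.9, 0.0], [0.0, 0.9] ];
var flatPoints = flattenVectors(points); // same 6 floats as the Float32Array version
```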

You can use either form interchangeably, but I prefer to use arrays of vec* classes because they are compatible with the math functions provided by Dr. Angel, and the arrays provide an easy way to add and remove points with the .push() and .pop() methods. This allows you to easily write functions that create arbitrarily large arrays, like this one for making circles with a radius of 1:

```javascript
function circle(sides)
{
    var vertices = []; // create empty array

    if (sides < 3)
    {
        console.log("function circle: Not enough sides to make a polygon.");
    }
    else
    {
        if (sides > 10000)
        {
            sides = 10000;
            console.log("function circle: Sides limited to 10,000.");
        }
        // Walk clockwise around the unit circle. The loop produces sides + 1
        // vertices and ends where it started, which closes the outline.
        for (var i = sides; i >= 0; i--)
        {
            vertices.push(vec2(Math.cos(i/sides*2*Math.PI), Math.sin(i/sides*2*Math.PI)));
        }
    }

    return vertices;
}
```

They are also easily concatenated with the concat method, which comes in handy for packing multiple drawable objects into one buffer.
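When several shapes share one buffer, you also need to remember where each one starts so you can pass the right first and count values to drawArrays. A sketch, assuming shapes are given as arrays of plain [x, y] pairs; packShapes and the shape names are hypothetical:

```javascript
// Concatenate several shapes into one vertex list and record, for each shape,
// the `first` and `count` values to pass to drawArrays later.
function packShapes(shapes) {
    var vertices = [];
    var ranges = {};
    shapes.forEach(function (shape) {
        ranges[shape.name] = { first: vertices.length, count: shape.vertices.length };
        vertices = vertices.concat(shape.vertices);
    });
    return { vertices: vertices, ranges: ranges };
}

var triangle = [ [0.9, 0.9], [0.9, 0.0], [0.0, 0.9] ];
var square   = [ [0.99, 0.99], [-0.99, 0.99], [-0.99, -0.99], [0.99, -0.99] ];
var packed = packShapes([ { name: "triangle", vertices: triangle },
                          { name: "square",   vertices: square } ]);

// Later, in the draw function:
// gl.drawArrays(gl.TRIANGLES, packed.ranges.triangle.first, packed.ranges.triangle.count);
// gl.drawArrays(gl.LINE_LOOP, packed.ranges.square.first,   packed.ranges.square.count);
```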

Once you have some vertex data, you need to load it into buffers. Each array can be loaded into a separate buffer, or all the arrays can be packed into the same buffer. You will find examples of both in various code samples in your textbook. For now, we will use two separate buffers: one for vertex positions and one for vertex colours.

To create a buffer, you use the createBuffer function (similar to the OpenGL ES glGenBuffers command). createBuffer() creates a valid buffer name, which you must bind before you can load it with buffer data or attach it to a shader attribute.

```
WebGLBuffer createBuffer();
```

Once you have a buffer name, you bind it with bindBuffer. A buffer is not allocated until you bind it the first time.

```
void bindBuffer(GLenum target, WebGLBuffer buffer);
```

Where target indicates what type of data the buffer holds, either ARRAY_BUFFER or ELEMENT_ARRAY_BUFFER, and buffer is a valid buffer name generated with createBuffer().

You will use the target type ARRAY_BUFFER for storing all vertex data in these labs.

With the buffer bound, you are ready to load data into it with bufferData. This function comes in two forms: form 1 specifies a buffer size and initializes the buffer with 0s, and form 2 initializes the buffer from a data source, like a flat array.

Form 1:

```
void bufferData(GLenum target, GLsizeiptr size, GLenum usage);
```

Form 2:

```
void bufferData(GLenum target, BufferDataSource data, GLenum usage);
```

Where:

- target indicates what type of data you are sending (you will always use ARRAY_BUFFER),
- size is the size of buffer to allocate in bytes,
- data is a reference to a flat (contiguous, non-pointer) array, like the Float32Array above,
- usage indicates how the data will be used.

Since you will likely use your buffers for drawing simple geometric objects, you will generally specify the STATIC_DRAW usage type. If you plan to update the buffer frequently, you might want to specify DYNAMIC_DRAW. If you plan to set the data once and use it only a few times, specify STREAM_DRAW. A buffer's data may be updated with another call to bufferData() or, if you only need to change a portion of it, with a call to bufferSubData().

Here is how we would load our sample triangle position data. Since we will only load the data once, place this code in init after the shader loading code:

```javascript
//*** Position buffer **********************
// Create a buffer for vertex positions, make it active, and copy data to it
var positionBuffer = gl.createBuffer();
gl.bindBuffer( gl.ARRAY_BUFFER, positionBuffer );

// Use this form for Float32Array data
//gl.bufferData( gl.ARRAY_BUFFER, points, gl.STATIC_DRAW );

// Use this form for arrays of arrays or of vecs
gl.bufferData( gl.ARRAY_BUFFER, flatten(points), gl.STATIC_DRAW );
```
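If you later change part of the position data, bufferSubData takes a byte offset into the bound buffer. The hypothetical helper below replaces one 2D vertex; it assumes the buffer holds tightly packed vec2 positions (ideally created with DYNAMIC_DRAW if you will do this often).

```javascript
// Hypothetical helper: overwrite vertex `index` in a buffer of packed vec2 positions.
// Each vertex is two 32-bit floats, so vertex i starts at byte i * 8.
function updateVertex2D(gl, buffer, index, x, y) {
    var bytesPerVertex = 2 * Float32Array.BYTES_PER_ELEMENT; // 8 bytes
    gl.bindBuffer(gl.ARRAY_BUFFER, buffer);
    gl.bufferSubData(gl.ARRAY_BUFFER, index * bytesPerVertex, new Float32Array([x, y]));
}

// Usage: move the third vertex of the sample triangle to the centre.
// updateVertex2D(gl, positionBuffer, 2, 0.0, 0.0);
```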

To get a reference to a shader input you use getAttribLocation.

```
GLint getAttribLocation(WebGLProgram program, DOMString name);
```

Where program is a valid, compiled and linked shader program, and name is a string containing the name of the desired shader input.

If name does not refer to an active input in the specified shader program, the returned result will be -1. WebGL restricts shader names to a maximum of 256 characters, and requesting one with a longer name will also return -1.
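Since -1 is an ordinary number, a misspelled name (or an attribute the shader compiler removed because it is unused) can slip through silently. A small hypothetical checking helper, not part of WebGL or the textbook code:

```javascript
// Hypothetical helper: look up an attribute and fail loudly if it is missing,
// instead of passing -1 on to enableVertexAttribArray.
function requireAttribLocation(gl, program, name) {
    var location = gl.getAttribLocation(program, name);
    if (location === -1) {
        throw new Error("Attribute '" + name + "' not found in shader program");
    }
    return location;
}

// Usage: var vPosition = requireAttribLocation(gl, program, "vPosition");
```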

To enable the shader input you use enableVertexAttribArray.

```
void enableVertexAttribArray(GLuint index);
```

Where index is a valid value returned from getAttribLocation.

To attach the currently bound buffer to a shader input you use vertexAttribPointer.

```
void vertexAttribPointer(GLuint index, GLint size, GLenum type, GLboolean normalized, GLsizei stride, GLintptr offset);
```

Where:

- index is a valid value returned from getAttribLocation.
- size is the number of components being sent per vertex. Must be 1, 2, 3, or 4.
- type is the data type of the components. You will usually use FLOAT, but for colours UNSIGNED_BYTE is helpful because it allows you to specify values from 0 to 255 instead of 0 to 1.
- normalized is for integer data and doesn't apply to the type FLOAT, so pass false with FLOAT data. When true, it causes unsigned integer data such as UNSIGNED_BYTE to be mapped to floats between 0 and 1, which is how shaders expect colours to be formatted.
- stride indicates the number of bytes from the start of one value that applies to this shader input to the start of the next. This allows us to send interleaved data. If your values are tightly packed, use 0.
- offset is the offset in bytes of the first relevant component in your buffer. If you are using separate buffers for each vertex attribute (colour, position, texture coordinate, etc.), this should be 0.
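For interleaved data, stride and offset follow directly from the component counts. The sketch below assumes all components are FLOATs; interleavedLayout is a hypothetical helper, and the commented calls show how its results would be passed to vertexAttribPointer.

```javascript
// Hypothetical helper: stride and per-attribute byte offsets for interleaved
// float data, e.g. [x, y, r, g, b, a,  x, y, r, g, b, a, ...]
function interleavedLayout(sizes) {
    var bytesPerFloat = Float32Array.BYTES_PER_ELEMENT; // 4
    var offsets = [];
    var stride = 0;
    for (var i = 0; i < sizes.length; i++) {
        offsets.push(stride);              // this attribute starts after the previous ones
        stride += sizes[i] * bytesPerFloat;
    }
    return { stride: stride, offsets: offsets };
}

var layout = interleavedLayout([2, 4]); // vec2 position followed by vec4 colour
// layout.stride is 24 and layout.offsets is [0, 8], so with one shared buffer bound:
// gl.vertexAttribPointer(vPosition, 2, gl.FLOAT, false, layout.stride, layout.offsets[0]);
// gl.vertexAttribPointer(vColor,    4, gl.FLOAT, false, layout.stride, layout.offsets[1]);
```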

The purpose of the size and type arguments is to describe the data being sent to the shader. If the original data doesn't match what's asked for in the shader, it will be converted for you. In fact, all vertex attributes are converted to size 4. If y or z are missing, they become 0, and if w is missing it becomes 1. You can then define an attribute in the shader of a different size depending on your need.
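The padding rule can be written out as a tiny function. This is only a model of what WebGL does when it feeds a vertex attribute to the shader, not something you need to call yourself; expandToVec4 is a hypothetical name.

```javascript
// Model of attribute padding: missing y and z become 0, missing w becomes 1.
function expandToVec4(components) {
    if (components.length < 1 || components.length > 4) {
        throw new Error("An attribute has 1 to 4 components");
    }
    var result = [0, 0, 0, 1];             // defaults for x, y, z, w
    for (var i = 0; i < components.length; i++) {
        result[i] = components[i];         // supplied components replace defaults
    }
    return result;
}

// A 2D position sent with size 2 arrives in the shader as (x, y, 0, 1):
var p = expandToVec4([0.9, 0.0]);
```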

Here is how we will attach the sample triangle position buffer to the "vPosition" input of the shader:

```javascript
// Enable the shader's vertex position input and attach the active buffer
var vPosition = gl.getAttribLocation( program, "vPosition" );
gl.enableVertexAttribArray( vPosition );
gl.vertexAttribPointer( vPosition, 2, gl.FLOAT, false, 0, 0 );
```

Finally, to draw things, use drawArrays.

```
void drawArrays(GLenum mode, GLint first, GLsizei count);
```

Where:

- mode is the primitive to draw with
- first is the index of the vertex to start at in the array you loaded
- count is how many vertices to draw.

To draw the sample triangle, place this code in the draw function:

```javascript
gl.clear( gl.COLOR_BUFFER_BIT );
gl.drawArrays( gl.TRIANGLES, 0, 3 );
```

If you have done everything to this point you should see a red triangle in the upper right corner of an otherwise white rendering canvas. Now it's time to experiment with different drawing modes. Place a screenshot in your lab document.

Three different line primitives can be created: LINES, LINE_STRIP and LINE_LOOP.

Some WebGL implementations let you control the width of lines with lineWidth(). Most Macs implement only the minimum range of line widths, 1.0 to 1.0. You may find that your PC allows more.
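You can ask the implementation for its supported range with getParameter(gl.ALIASED_LINE_WIDTH_RANGE), which returns a two-element [min, max] array. The hypothetical helper below clamps a requested width to that range before calling lineWidth(), so you can report what your machine actually allowed.

```javascript
// Hypothetical helper: clamp a requested line width to the supported range,
// apply it, and return the width that was actually set.
function setLineWidthClamped(gl, requested) {
    var range = gl.getParameter(gl.ALIASED_LINE_WIDTH_RANGE); // [min, max]
    var width = Math.min(Math.max(requested, range[0]), range[1]);
    gl.lineWidth(width);
    return width;
}

// Usage: console.log("Line width in use: " + setLineWidthClamped(gl, 5.0));
```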

Try this points array with each of the triangle types (TRIANGLES, TRIANGLE_STRIP and TRIANGLE_FAN), remembering to draw all 6 vertices. Place a screenshot of each into your lab document.

```javascript
//Triangle
var points =
[
    vec2( 0.0, 0.0 ),
    vec2( 0.5, 0.0 ),
    vec2( 0.5, 0.5 ),
    vec2(-0.5, 0.5 ),
    vec2(-1.0, 0.0 ),
    vec2(-0.5,-0.5 )
];
```
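To see which vertices make up each triangle, the sketch below lists the index triples each triangle mode takes from a vertex list. It follows the usual OpenGL ordering, in which every other strip triangle swaps its first two vertices so all triangles keep the same winding; triangleIndices is a hypothetical helper and uses strings in place of the gl.* constants.

```javascript
// Hypothetical helper: the vertex index triples each triangle mode draws.
function triangleIndices(mode, count) {
    var triangles = [];
    var i;
    if (mode === "TRIANGLES") {
        for (i = 0; i + 2 < count; i += 3) {
            triangles.push([i, i + 1, i + 2]);          // independent groups of three
        }
    } else if (mode === "TRIANGLE_STRIP") {
        for (i = 0; i + 2 < count; i++) {
            // odd triangles swap their first two vertices to keep the winding consistent
            triangles.push(i % 2 === 0 ? [i, i + 1, i + 2] : [i + 1, i, i + 2]);
        }
    } else if (mode === "TRIANGLE_FAN") {
        for (i = 1; i + 1 < count; i++) {
            triangles.push([0, i, i + 1]);              // every triangle shares vertex 0
        }
    } else {
        throw new Error("Not a triangle mode: " + mode);
    }
    return triangles;
}

// For the six points above:
// TRIANGLES      -> [0,1,2] [3,4,5]
// TRIANGLE_STRIP -> [0,1,2] [2,1,3] [2,3,4] [4,3,5]
// TRIANGLE_FAN   -> [0,1,2] [0,2,3] [0,3,4] [0,4,5]
```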

It may be hard to see why you get the results you observe. Consider the order in which the points are defined and how triangles are formed for each triangle type.

So far our shader has used a hard coded colour. You can change this colour in a running program in one of two ways: uniforms and attributes. These are explained below.

All our colours will be in RGBA format: Red, Green, Blue, Alpha. Alpha is an extra term used in blending operations. You can think of it as "transparency", but it can do more than that. The alpha channel will be ignored in our programs this week.
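Shaders work with colour channels between 0 and 1. If you think of colours as 0 to 255 values, as in a paint program, dividing by 255 gives the shader's form; this is also what vertexAttribPointer does to UNSIGNED_BYTE data when normalized is true. rgba255ToFloat is a hypothetical helper:

```javascript
// Convert an 8-bit-per-channel RGBA colour (0-255) to the 0-1 floats shaders use.
function rgba255ToFloat(r, g, b, a) {
    return [r / 255, g / 255, b / 255, a / 255];
}

var orange = rgba255ToFloat(255, 165, 0, 255); // about [1, 0.647, 0, 1]
```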

A uniform is a shader variable that has a constant value during a draw operation, but can be changed between draw operations with WebGL commands. Uniforms can be declared in vertex and fragment shader programs.

In your shader code, a uniform is declared alongside attributes and varyings like this:

```glsl
uniform type uniformName;

// e.g. a 4 component colour uniform
uniform vec4 uColor; // copy this to your colour output
```

You get access to a uniform in much the same way as a vertex array input, but you use getUniformLocation:

```
WebGLUniformLocation getUniformLocation(WebGLProgram program, DOMString name);
```

For example, to get the colour uniform from the example above for use in the lab sample code:

```javascript
var uColor; // Getting uniforms can be slow, so make this global

// And put this in init:
uColor = gl.getUniformLocation(program, "uColor");
```

You change the value of a uniform with uniform*() functions. The * represents the format of the uniform you are changing and has two or three parts:

| In Shader | Matching uniform*() function |
|---|---|
| float | uniform1f |
| int | uniform1i |
| vec2 | uniform2f or uniform2fv |
| vec3 | uniform3f or uniform3fv |
| vec4 | uniform4f or uniform4fv |

For example, either of these sets uColor to yellow:

```javascript
gl.uniform4f( uColor, 1.0, 1.0, 0.0, 1.0 ); // Yellow

var yellow = vec4( 1.0, 1.0, 0.0, 1.0 ); // Yellow
gl.uniform4fv( uColor, flatten(yellow) );
```
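One difference from attributes: getUniformLocation returns null, not -1, when the name is not an active uniform, and WebGL silently ignores uniform*() calls made with a null location. A typo in the name therefore just leaves the colour unchanged. A hypothetical checking helper in the same spirit as a manual check:

```javascript
// Hypothetical helper: look up a uniform and report a missing name early.
// getUniformLocation returns null for unknown (or optimized-away) uniforms.
function requireUniformLocation(gl, program, name) {
    var location = gl.getUniformLocation(program, name);
    if (location === null) {
        throw new Error("Uniform '" + name + "' not found in shader program");
    }
    return location;
}

// Usage in init: uColor = requireUniformLocation(gl, program, "uColor");
```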

Colour attributes work just like vertex position arrays. You will need to set up a second attribute input in your vertex shader, create a colour array, load it into a buffer and attach it to your shader. Here are samples of all three:

The following code defines an attribute input called vColor. It is similar to the code used for vPosition. You should assign the value in vColor to the color output:

```glsl
attribute vec4 vColor; // Per vertex colour input
```

```javascript
// for the initial triangle
var colors = [
    vec4(1.0, 0.0, 0.0, 1.0), // Red
    vec4(0.0, 1.0, 0.0, 1.0), // Green
    vec4(0.0, 0.0, 1.0, 1.0)  // Blue
];

// for the later triangle types example
var colors = [
    vec4(1.0, 0.0, 0.0, 1.0), // Red
    vec4(0.0, 1.0, 0.0, 1.0), // Green
    vec4(0.0, 0.0, 1.0, 1.0), // Blue
    vec4(1.0, 1.0, 0.0, 1.0), // Yellow
    vec4(0.0, 1.0, 1.0, 1.0), // Cyan
    vec4(1.0, 0.0, 1.0, 1.0)  // Magenta
];
```

Then copy the colour data to a buffer, like this:

```javascript
//*** Colour buffer **********************
// Create a buffer for vertex colours, make it active, and copy data to it
var colorBuffer = gl.createBuffer();
gl.bindBuffer( gl.ARRAY_BUFFER, colorBuffer );
gl.bufferData( gl.ARRAY_BUFFER, flatten(colors), gl.STATIC_DRAW );

// Enable the shader's vertex colour input and attach the active buffer
var vColor = gl.getAttribLocation( program, "vColor" );
gl.enableVertexAttribArray( vColor );
gl.vertexAttribPointer( vColor, 4, gl.FLOAT, false, 0, 0 );
```

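The process is very similar to the position buffer setup. The differences are the buffer variable (colorBuffer), the data array (colors), the attribute name (vColor), and the size argument to vertexAttribPointer (4 components instead of 2).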

The colour buffer and depth buffer are usually cleared each time you begin drawing to the WebGL canvas. The values you clear with rarely change, so they are often set in the initialisation step with the clearColor and clearDepth functions:

```javascript
gl.clearColor(0.0, 0.0, 0.0, 1.0 ); // clear colour is black
gl.clearDepth(1.0);                 // clear to maximum distance
```

The actual clearing happens just before you draw. In your main draw routine, you specify which buffers to clear with the gl.clear function:

```javascript
gl.clear(gl.COLOR_BUFFER_BIT | gl.DEPTH_BUFFER_BIT);
```

In this lab you will be drawing 2D objects. When you draw in 2D (or you are doing 3D CAD work) you should use a special geometry transformation that does not cause shape or size distortion. This transformation is called orthographic projection. In the last lab we wanted a 3D effect with foreshortening so we used perspective projection. Perspective transformation makes it hard to place things precisely on the screen. Shapes are distorted toward the edges and corners, and their apparent size varies with their distance from the camera. With orthographic projection you can precisely control how coordinates map to the drawing area, and objects render the same way regardless of distance.

This week, we will use only simple normalized device coordinates: our drawing space will lie between (-1,-1) in the lower left corner and (1,1) in the upper right. If you are using 3D coordinates, then -1 is the nearest possible Z coordinate, and 1 is the farthest. Things do not appear smaller with distance. Next week, when you learn to do perspective() projection and other transformations, you will also see the textbook's ortho() function, which gives you control over how coordinates are mapped to the window when you don't use perspective.
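The mapping from normalized device coordinates to canvas pixels is a simple scale and shift. The sketch assumes the viewport covers the whole canvas (the default when the context is created), with WebGL's window origin at the lower left; ndcToWindow is a hypothetical helper.

```javascript
// Map an NDC point (x and y in [-1, 1]) to window coordinates in pixels,
// assuming a full-canvas viewport. The origin is the lower left corner.
function ndcToWindow(x, y, width, height) {
    return [ (x + 1) * width / 2, (y + 1) * height / 2 ];
}

// On a 512 x 512 canvas:
var lowerLeft = ndcToWindow(-1, -1, 512, 512);   // [0, 0]
var centre    = ndcToWindow( 0,  0, 512, 512);   // [256, 256]
var upperMid  = ndcToWindow( 0.5, 0.5, 512, 512); // [384, 384]
```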

In the last two sections we've discussed how to clear the depth buffer, and the default range of depth values. Perhaps you'd also like to know how to specify 3D vertices and do depth testing.

Without depth testing, objects appear on the screen in the order you draw them. If you want to draw something behind another thing you have already drawn, you need to turn on depth testing, supply depth values with your vertex coordinates, and clear the depth buffer each time you start drawing.

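In more detail, first enable depth testing in init:

```javascript
gl.enable(gl.DEPTH_TEST);
```

Then supply 3D positions. With vec3 data, declare vPosition as a vec3 in the vertex shader (and assign gl_Position = vec4(vPosition, 1.0)), and pass 3 as the size argument to vertexAttribPointer:

```javascript
// TRIANGLES
var points = [
    vec3( 0.0, 0.0,-0.5 ),
    vec3( 0.5, 0.0,-0.5 ),
    vec3( 0.5, 0.5,-0.5 ),
    vec3( 0.0, 1.0, 0.0 ),
    vec3( 0.0,-1.0, 0.0 ),
    vec3( 1.0, 0.0, 0.0 )
];

var colors = [
    vec4( 1.0, 0.0, 0.0, 1.0 ), // Triangle 1 is red
    vec4( 1.0, 0.0, 0.0, 1.0 ),
    vec4( 1.0, 0.0, 0.0, 1.0 ),
    vec4( 0.0, 1.0, 1.0, 1.0 ), // Triangle 2 is cyan
    vec4( 0.0, 1.0, 1.0, 1.0 ),
    vec4( 0.0, 1.0, 1.0, 1.0 )
];
```

Finally, clear the depth buffer along with the colour buffer each time you draw, as shown above.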

If everything works, the cyan triangle in this example appears behind the red, even though it is drawn second. In the default coordinate system, larger z values are farther away. With depth testing off, the cyan triangle would be in front of the red one.
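The following simplified model of a single pixel shows why the drawing order stops mattering once depth testing is on. It assumes WebGL's defaults (depth function LESS, depth range 0 to 1) and the clearDepth(1.0) shown earlier; the helper names are hypothetical.

```javascript
// Window depth from NDC z with the default depth range [0, 1]: -1 -> 0 (near), 1 -> 1 (far)
function ndcZToDepth(z) {
    return (z + 1) / 2;
}

// One pixel right after gl.clear(gl.COLOR_BUFFER_BIT | gl.DEPTH_BUFFER_BIT)
function clearedPixel() {
    return { color: "black", depth: 1.0 };
}

// Draw one fragment into the pixel
function drawFragment(pixel, z, color, depthTestEnabled) {
    if (!depthTestEnabled) {
        pixel.color = color;      // no test: the last fragment drawn wins
        return;                   // and the depth buffer is not updated
    }
    var depth = ndcZToDepth(z);
    if (depth < pixel.depth) {    // default comparison: LESS
        pixel.color = color;
        pixel.depth = depth;
    }
}

// Where the triangles overlap: red (z = -0.5) is drawn first, then cyan (z = 0.0)
var withTest = clearedPixel();
drawFragment(withTest, -0.5, "red", true);
drawFragment(withTest, 0.0, "cyan", true);      // depth 0.5 is not less than 0.25: rejected

var withoutTest = clearedPixel();
drawFragment(withoutTest, -0.5, "red", false);
drawFragment(withoutTest, 0.0, "cyan", false);  // drawn last, so it covers red
```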

It is good to get a feeling for where you can put points on the scene.

The following instructions are meant to get you started from one of the template projects provided on the lab schedule. Your lab instructor will probably do a. through c. during the lab demo:

```javascript
// Explicitly set the clear colour to black or a colour you like
// Load, compile and use a shader
// Load the simple triangle position data near the top of the notes into a buffer
// Bind the buffer to your shader's vPosition input
```

Then add these four vertices after the triangle's three in your points array, and draw them as a line loop:

```javascript
vec2( 0.99, 0.99), vec2(-0.99, 0.99), vec2(-0.99,-0.99), vec2( 0.99,-0.99),
```

```javascript
gl.drawArrays(gl.LINE_LOOP, 3, 4); // Start at the fourth vertex, draw four vertices
```

/12 - Draw a picture that contains at least three of the various OpenGL primitives. It should look very different from any in-lab demonstrations.

/6 - Use at least 3 different colours. Do this with a uniform shader variable or a vertex color array input.

/4 - Use at least two point sizes. A uniform must be set up to allow you to set the point size from Javascript.

/4 - Write code that would draw at least two different line sizes. Tell me in a comment whether or not it worked, and on what OS+graphics card combination.

/10 - Artistic impression - your drawing should resemble something and reflect some effort.